Your Data Left the Building (And You Didn't Notice)

Here's a question most European businesses can't answer: when your team uses an AI tool — a chatbot, a transcription service, an AI assistant — where does that data actually go?

Not where the privacy policy says it goes. Where it actually goes.

The invisible pipeline

Until recently, the major cloud AI providers defaulted to processing data in US-based data centers, in regions like Virginia, Oregon, and Iowa. Some now offer EU data residency for enterprise customers (OpenAI launched theirs in early 2025). For most businesses, though, the default path is still the same: your data crosses the Atlantic. That is especially true for anyone on free, Plus, or standard API tiers. When a Norwegian staffing company pastes a candidate's CV into ChatGPT, it typically lands on a US server. When a German hospital uses a US-hosted cloud AI to summarize patient notes, those notes leave Europe in milliseconds.

A 2024 Cisco survey found that 48% of businesses admitted to entering non-public company information into generative AI tools. Samsung banned ChatGPT and similar tools on company devices after engineers pasted proprietary semiconductor source code into it. Cyberhaven found that 11% of the data employees paste into ChatGPT is confidential.

Most companies have no visibility into this. Zero. Their employees use AI tools the same way they use Google — reflexively, without thinking about where the query goes.

What "GDPR compliant" actually means

Most AI vendors claim GDPR compliance. In practice, that typically means they've signed Standard Contractual Clauses (SCCs) and promise to handle European data according to GDPR principles. The data can still leave Europe. It can still be processed on US servers. And because the vendor is an American company, it remains reachable under US law. That includes FISA Section 702, which allows US intelligence agencies to compel American service providers to hand over data on non-US persons without notifying the data subject.

The Court of Justice of the European Union (CJEU) has already struck down two transatlantic data transfer frameworks: Safe Harbor in 2015 and Privacy Shield in 2020. In both cases, the court ruled that US surveillance law did not give Europeans protection essentially equivalent to EU law. The current EU-US Data Privacy Framework, adopted in 2023, is widely expected to face the same legal challenge.

When a vendor says "GDPR compliant," what they often mean is "we've done the paperwork." What they don't mean is "your data stays in Europe." Those are very different things.

The fines are real

GDPR enforcement has accelerated dramatically. In 2023, Meta was fined €1.2 billion — the largest GDPR fine ever — for transferring European user data to US servers. Not for a breach. Not for negligent security. For the transfer itself.

Italian regulators temporarily banned ChatGPT in 2023 over data protection concerns. The Norwegian Data Protection Authority fined Grindr €5.8 million for sharing user data without valid consent. French regulators fined Clearview AI €20 million for scraping facial data from European citizens.

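For the most serious violations, including unlawful transfers of personal data outside the EU, GDPR fines can reach €20 million or 4% of total worldwide annual turnover, whichever is higher. For a mid-size European company with €50 million in revenue, 4% would be only €2 million, so the €20 million ceiling applies instead. That is the potential exposure for a single data transfer violation.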
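The "whichever is higher" rule is easy to misread, so here is a minimal Python sketch of the upper-tier ceiling in GDPR Article 83(5). The function name is hypothetical. It computes only the statutory maximum. The fine a regulator actually imposes depends on the factors listed in Article 83(2).

```python
def gdpr_upper_tier_cap_eur(worldwide_turnover_eur: int) -> int:
    """Hypothetical helper: maximum fine under GDPR Art. 83(5).

    The cap is the higher of a fixed EUR 20 million or 4% of total worldwide
    annual turnover for the preceding financial year. It returns a ceiling,
    not a predicted fine.
    """
    fixed_cap = 20_000_000
    turnover_cap = worldwide_turnover_eur * 4 // 100  # 4%, in whole euros
    return max(fixed_cap, turnover_cap)


for turnover in (50_000_000, 500_000_000, 2_000_000_000):
    print(f"turnover EUR {turnover:,}: cap EUR {gdpr_upper_tier_cap_eur(turnover):,}")
```

The fixed €20 million figure dominates until turnover passes €500 million. Only above that point does the 4% rule raise the ceiling.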

The gap between perception and reality

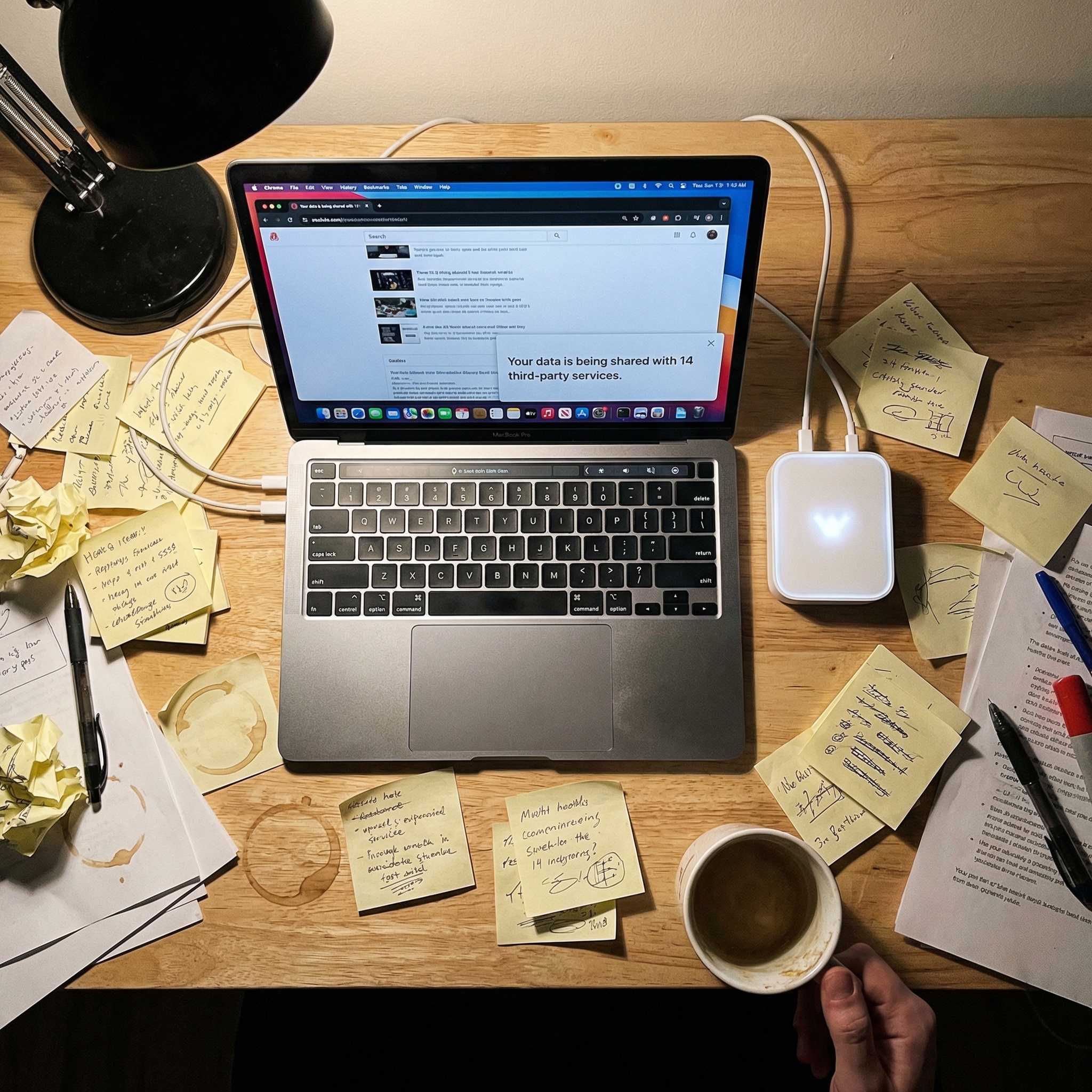

Most organizations believe they're GDPR compliant. But when Deloitte audited actual compliance practices, they found only 34.5% could defensibly demonstrate it. Meanwhile, 27% of organizations have banned generative AI tools entirely over privacy concerns, according to Cisco's 2024 privacy benchmark. The gap between perceived and actual compliance is enormous — and AI tools are making it worse.

Every AI interaction is a data processing event. Every prompt potentially contains personal data. Every response is generated on infrastructure that the business doesn't control, can't audit, and often can't even locate.

Most companies treat AI tools like utilities. Plug in, use, don't think about the wiring. But the wiring matters. It can be the difference between routine compliance and a fine of up to €20 million.

The only architecture that solves this

There's exactly one way to guarantee that data doesn't leave your jurisdiction: don't send it anywhere. Process it locally. On hardware you own, in a building you control, on a network that doesn't touch the public internet.
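Client code can enforce "don't send it anywhere" so that it no longer depends on people remembering a policy. Below is a minimal Python sketch using only the standard library. It refuses to send a prompt unless the AI endpoint's host resolves solely to loopback or private network addresses. The function names and example URLs are hypothetical. A private address alone does not prove the server is cut off from the public internet, so treat this as one guardrail alongside network controls.

```python
import ipaddress
import socket
from urllib.parse import urlparse


def endpoint_is_local(url: str) -> bool:
    """Hypothetical guard: True only if every address the URL's host resolves
    to is private (is_private also covers loopback, e.g. 127.0.0.1 and ::1).
    Anything unparseable or unresolvable fails closed."""
    host = urlparse(url).hostname
    if not host:
        return False
    try:
        infos = socket.getaddrinfo(host, None)
    except socket.gaierror:
        return False  # cannot resolve: do not send
    addresses = {info[4][0] for info in infos}  # sockaddr[0] is the IP string
    return bool(addresses) and all(
        ipaddress.ip_address(addr).is_private for addr in addresses
    )


def send_prompt(url: str, prompt: str) -> None:
    """Hypothetical wrapper: block the request before any data leaves."""
    if not endpoint_is_local(url):
        raise PermissionError(f"refusing to send prompt to non-local endpoint {url}")
    # Hand the prompt to the on-premises inference server here.
```

The guard fails closed by design. A misconfigured or unresolvable endpoint blocks the prompt instead of letting it through.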

On-premises AI isn't a preference. For European businesses handling personal data — which is most of them — it's the only architecture that eliminates transfer risk entirely. No SCCs needed. No reliance on geopolitical frameworks that keep getting invalidated. No exposure to foreign surveillance law.

The data comes in. The AI processes it. The data stays. That's not a feature. That's the baseline for what compliance actually requires.